Building a 7-Dimension ACL Framework for 25 AI Agents

Traditional ACL asks who can access what. Agent ACL has to answer across seven dimensions at once. Here's the 175-element matrix we built for BUCC and why default-deny is the only model that survives contact with production.

Your AI agents already have access to your entire infrastructure. Database credentials. API keys. Customer PII. Source code. Financial systems. External communication channels. One compromised agent is everything exposed at once.

The industry's answer to this is usually a single sentence: "we scoped the API key." That's not an access control model. That's a shrug.

The diagnosis: single-dimension ACL is a category error

Traditional ACL asks one question: who can access what? Users, groups, roles, resources. That model was designed for humans and services that do one thing. It does not survive contact with an autonomous agent.

An agent isn't a principal. It's a process that reads memory, calls tools, hits APIs, handles data of multiple sensitivities, works on several projects in parallel, and reaches into external communication channels, all in the same turn. Asking "who can access what" collapses six other questions into a single flag. The moment you scale past two agents, the model breaks.

We asked a different question: what does access control have to answer when the principal is an agent?

What we built: a 7-dimension ACL matrix

BUCC enforces access control across seven independent dimensions, evaluated on every agent action. The matrix is 25 agents × 7 dimensions = 175 elements, PostgreSQL-backed, cached at 60-second TTL for hot-path latency, and default-deny everywhere.

- Dimension 1,

MEMORY_READ. Which memory tiers and which other agents' scopes can this agent read from? Tier 1 (global), Tier 2 (agent-specific), Tier 3 (session) are all independently gated. Most cross-agent memory reads are blocked by default. Information leakage between agents is an explicit choice, not a side effect. - Dimension 2,

MEMORY_WRITE. Can this agent write into another agent's memory? Almost always no. Write access is the attack surface for cache poisoning, false-context injection, and one agent silently manipulating another's reasoning. Read-only is the baseline; write is a named exception. - Dimension 3,

TOOL_ACCESS. Which of the integrated tools can this agent invoke? Finance agents reach Vaultline; cybersecurity agents don't. Researchers get DeepSearch and SynthQuery; finance agents don't. Browser agents get per-domain allowlists; sandbox agents get none. Every tool binding is one cell in the matrix. - Dimension 4,

API_ACCESS. Which of the 390+ backend endpoints across 58 routers can this agent hit? Marketing agents can reach/api/content,/api/social,/api/analytics. Finance agents reach/api/finance,/api/reporting. Cross-access is denied at the gateway, not at the router. The enforcement is route-level, not intent-level. - Dimension 5,

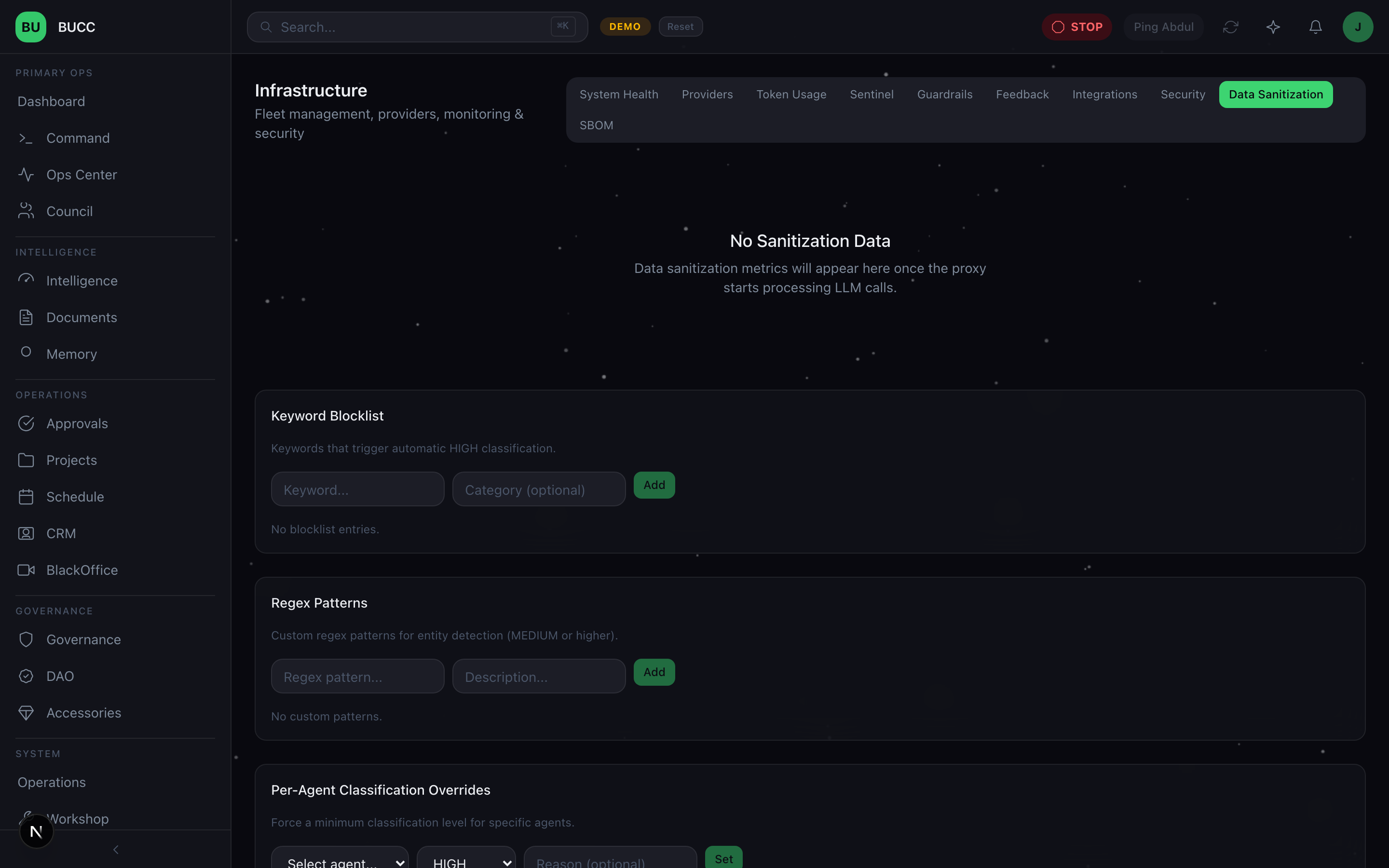

DATA_CLASSIFICATION. Can this agent touchHIGH,MEDIUM, orLOWsensitivity data?HIGHmeans customer PII, financial records, source code, those requests are pinned to L1 local models through the Data Sanitization Proxy and never touch mem0 vector storage.MEDIUMis internal product context.LOWis marketing copy and blog drafts. The classification travels with the data, not with the agent. - Dimension 6,

PROJECT_SCOPE. Which projects is this agent assigned to? An agent with tool access to DeepSearch and data-class access toMEDIUMstill cannot read Project D's research if it was only assigned to Projects A–C. Tool permission plus data class is not enough, project scope is a distinct gate. - Dimension 7,

COMMUNICATION. Which outbound channels is this agent allowed to speak through? Finance and cybersecurity agents are blocked from PulseChat, ChatBridge, and external email, internal ChatOps only. Marketing agents can post to social. Sales agents can send customer email. Every outbound byte crosses exactly one of these gates.
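To make the shape of the matrix concrete, here is a minimal in-memory sketch in Python. It is not BUCC's code: the agent IDs, the function names, and the choice to model each cell as a set of allowed resources are all assumptions made for illustration. What it does show is the arithmetic (25 agents × 7 dimensions = 175 cells) and that an empty cell means DENY.

```python
from enum import Enum


class Dimension(Enum):
    MEMORY_READ = "MEMORY_READ"
    MEMORY_WRITE = "MEMORY_WRITE"
    TOOL_ACCESS = "TOOL_ACCESS"
    API_ACCESS = "API_ACCESS"
    DATA_CLASSIFICATION = "DATA_CLASSIFICATION"
    PROJECT_SCOPE = "PROJECT_SCOPE"
    COMMUNICATION = "COMMUNICATION"


# Hypothetical agent IDs; the real fleet's names are not given in this post.
AGENT_IDS = [f"agent-{n:02d}" for n in range(1, 26)]


def empty_matrix(agent_ids):
    """Every (agent, dimension) cell starts as an empty allow-set, i.e. DENY."""
    return {(agent, dim): frozenset() for agent in agent_ids for dim in Dimension}


def grant(matrix, agent, dim, resource):
    """Return a new matrix with one explicit allow added to one cell."""
    updated = dict(matrix)
    updated[(agent, dim)] = matrix[(agent, dim)] | {resource}
    return updated


def is_allowed(matrix, agent, dim, resource):
    # Unknown agents and empty cells both fall through to deny.
    return resource in matrix.get((agent, dim), frozenset())


matrix = empty_matrix(AGENT_IDS)
matrix = grant(matrix, "agent-01", Dimension.TOOL_ACCESS, "DeepSearch")

print(len(matrix))  # 175
print(is_allowed(matrix, "agent-01", Dimension.TOOL_ACCESS, "DeepSearch"))  # True
print(is_allowed(matrix, "agent-02", Dimension.TOOL_ACCESS, "DeepSearch"))  # False
```

Nothing in the sketch offers a wildcard: the only way to get a True out of is_allowed is a grant naming that agent, that dimension, and that resource.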

The enforcement loop

Every agent action triggers a lookup against the matrix before it touches anything. The path is deliberate and short:

- Agent emits an intent (read memory, call tool, hit endpoint, classify data, scope to project, send message).

- The gateway classifies the intent against the seven dimensions.

- Each dimension is checked independently against the cached ACL row for that agent.

- A denial on any dimension fails the whole action closed.

- The check, the result, and the reason are logged to the audit trail.

Default-deny is non-negotiable. If a dimension has no explicit allow, the action fails. There is no "wildcard" fallback. There is no "admin" agent. The matrix is the only source of truth.
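The loop above can be sketched in a few lines of Python. Treat this as an illustration under assumptions, not the gateway's actual implementation: the Requirement and Gateway names, the audit record layout, and the rule that an unclassifiable intent is denied are ours. The example reuses the Project A–C versus Project D case from the PROJECT_SCOPE dimension.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Requirement:
    dimension: str  # e.g. "TOOL_ACCESS"
    resource: str   # e.g. "DeepSearch"


@dataclass
class Gateway:
    # (agent_id, dimension) -> set of explicitly allowed resources.
    grants: dict
    audit_log: list = field(default_factory=list)

    def authorize(self, agent_id, requirements):
        """Check each dimension independently; any miss denies the whole action."""
        if not requirements:
            # Assumption: an intent that could not be classified fails closed.
            self._log(agent_id, None, "DENY", "intent could not be classified")
            return False
        allowed_everywhere = True
        for req in requirements:
            cell = self.grants.get((agent_id, req.dimension), frozenset())
            if req.resource in cell:
                self._log(agent_id, req, "ALLOW", "explicit grant")
            else:
                self._log(agent_id, req, "DENY", "no explicit grant")
                allowed_everywhere = False
        return allowed_everywhere

    def _log(self, agent_id, req, result, reason):
        # The check, the result, and the reason all go to the audit trail.
        self.audit_log.append(
            {"agent": agent_id, "check": req, "result": result, "reason": reason}
        )


gateway = Gateway(grants={
    ("researcher-1", "TOOL_ACCESS"): {"DeepSearch"},
    ("researcher-1", "DATA_CLASSIFICATION"): {"MEDIUM", "LOW"},
    ("researcher-1", "PROJECT_SCOPE"): {"A", "B", "C"},
})


def research_read(project):
    """Requirements for reading MEDIUM research in one project via DeepSearch."""
    return [
        Requirement("TOOL_ACCESS", "DeepSearch"),
        Requirement("DATA_CLASSIFICATION", "MEDIUM"),
        Requirement("PROJECT_SCOPE", project),
    ]


print(gateway.authorize("researcher-1", research_read("A")))  # True
print(gateway.authorize("researcher-1", research_read("D")))  # False
```

The second call passes two of its three checks and still fails, which is the point: the dimensions are combined with AND, and every individual check is logged whether it passed or not.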

The principles behind the matrix

Seven dimensions, not one. We landed on seven because each represents an attack surface a single-dimension model cannot express. Collapse any of them and you create a class of actions the system can't reason about. A five-dimension model would let an agent with the right tool access exfiltrate the wrong data class. A three-dimension model would let the wrong project read the right memory. Seven is the smallest number that covers the full shape of "what an agent can do."

Default-deny, whitelist-only. Every cell in the 175-element matrix starts at DENY. Access is granted explicitly, per-agent, per-dimension. This is the opposite of how most teams provision agents, which is to grant broad access at the start and tighten later. Tightening later never happens. Default-deny inverts that entropy gradient.

Per-agent granularity, not per-role. Roles look tempting when the fleet is small. They break the moment two agents with the same job have different trust levels. We collapsed roles into direct per-agent grants. Twenty-five agents × seven dimensions is a spreadsheet, not an abstraction problem.

Cache, but don't lie. A 60-second TTL cache sits in front of the matrix because the hot path cannot afford a PostgreSQL lookup on every action. But cache invalidation is immediate on grant revocation: a revoked permission is never served stale. Lag on adding access is acceptable. Lag on removing access is a breach window.
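A minimal sketch of that asymmetry, assuming a cache keyed on (agent_id, dimension). The AclCache class, the load_cell callback, and the injectable clock are hypothetical names for illustration; the dictionary stands in for the PostgreSQL-backed matrix.

```python
import time


class AclCache:
    """TTL cache over ACL cells: grants appear lazily, revocations immediately."""

    def __init__(self, load_cell, ttl_seconds=60.0, clock=time.monotonic):
        # load_cell(agent_id, dimension) -> iterable of allowed resources.
        self._load_cell = load_cell
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # (agent_id, dimension) -> (expires_at, allow-set)

    def get(self, agent_id, dimension):
        key = (agent_id, dimension)
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]
        allowed = frozenset(self._load_cell(agent_id, dimension))
        self._entries[key] = (now + self._ttl, allowed)
        return allowed

    def on_revoke(self, agent_id, dimension):
        # Drop the cached cell so the next check re-reads the source of truth.
        self._entries.pop((agent_id, dimension), None)


# Stand-in for the database, plus a fake clock so the demo is deterministic.
key = ("finance-1", "API_ACCESS")
store = {key: {"/api/finance"}}
fake_now = [0.0]
cache = AclCache(
    load_cell=lambda agent, dim: store.get((agent, dim), ()),
    clock=lambda: fake_now[0],
)

cache.get(*key)                            # warm the cache at t=0
store[key].add("/api/reporting")           # new grant lands in the store
print("/api/reporting" in cache.get(*key))  # False: grant is lazy

fake_now[0] = 61.0                         # TTL has expired
print("/api/reporting" in cache.get(*key))  # True

store[key].discard("/api/finance")         # revocation...
cache.on_revoke(*key)                      # ...invalidates immediately
print("/api/finance" in cache.get(*key))    # False, with no TTL wait
```

In a multi-process deployment the on_revoke call would have to reach every cache instance, which this single-process sketch does not attempt to show.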

Every denial is evidence. ACL denials are not noise. They are the single best signal we have for an agent behaving unexpectedly. A sudden spike in denials from one agent is the Sentinel's top-priority alert. Most of the time it's a misconfiguration. Sometimes it's the first sign of something actually wrong. Either way, you want to know.
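One way to turn denials into a signal is a per-agent sliding-window count. The sketch below is an assumption about how such a check could look, not a description of how the Sentinel works; the class name, window, and threshold are placeholders, and timestamps are assumed to arrive in non-decreasing order.

```python
from collections import defaultdict, deque


class DenialSpikeDetector:
    """Flags an agent whose denials within a time window reach a threshold."""

    def __init__(self, window_seconds=300.0, threshold=20):
        self._window = window_seconds
        self._threshold = threshold
        self._denials = defaultdict(deque)  # agent_id -> denial timestamps

    def record_denial(self, agent_id, timestamp):
        """Record one denial; return True if the agent is now at or over threshold."""
        events = self._denials[agent_id]
        events.append(timestamp)
        # Drop denials that have aged out of the window.
        while events and events[0] <= timestamp - self._window:
            events.popleft()
        return len(events) >= self._threshold


detector = DenialSpikeDetector(window_seconds=60.0, threshold=3)
print(detector.record_denial("finance-1", 0.0))    # False
print(detector.record_denial("finance-1", 10.0))   # False
print(detector.record_denial("finance-1", 20.0))   # True: 3 denials in 60s
print(detector.record_denial("marketing-1", 20.0)) # False: counted per agent
print(detector.record_denial("finance-1", 100.0))  # False: old denials aged out
```

A fixed threshold is the crudest possible definition of "spike"; comparing against each agent's own baseline would cut false alarms, at the cost of more state.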

The catch we hit

Every action triggers a lookup. Every lookup adds latency. The 60-second TTL cache absorbs most of it, but you still pay on cold paths and on revocation events. Too fine-grained and your agents are I/O-bound on their own permission checks. Too coarse-grained and a single misgrant leaks across dimensions.

We landed on per-agent, per-dimension as the right granularity. Finer than that (per-agent, per-tool, per-endpoint) is what the matrix already expresses internally; the seven dimensions are the aggregation layer, not the storage layer. Coarser than that (role-based) collapses the attack surface we built the matrix to cover.

Containment is the point

The reason the matrix matters is not that it prevents all failures. It's that it contains failures. When one agent goes wrong (compromised, misconfigured, hallucinating, or just wrong about its own job), the blast radius is bounded by its row in the matrix. Not by the fleet's worst-case permissions. Not by the most trusted agent on the team. By exactly what that one agent was granted.

That's defense in depth, written as a data structure.

A builder's note on the stack

- Storage: PostgreSQL. The ACL matrix is a first-class schema, not a config file. Grants are migrations. Revocations are migrations. Every change is reviewable in git history.

- Cache: 60-second TTL, keyed on (agent_id, dimension). Invalidation on revoke is immediate. Invalidation on grant is lazy.

- Audit: Every check, pass or fail, lands in the central audit log. The Sentinel agent runs a daily sweep for anomalies.

- Enforcement: At the gateway, not at the router. Routers are allowed to assume the request is already authorized. That assumption only holds because the gateway is the one layer that cannot be bypassed: every path to a router runs through it.
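For a feel of what "the matrix is a first-class schema" can mean, here is a small runnable sketch. It uses Python's built-in sqlite3 module only so it runs without a server; the production store described above is PostgreSQL. The acl_grants table, its columns, and the helper functions are hypothetical, and in a migration-driven setup the INSERT and DELETE statements would live in migration files rather than in application code.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE acl_grants (
        agent_id  TEXT NOT NULL,
        dimension TEXT NOT NULL CHECK (dimension IN (
            'MEMORY_READ', 'MEMORY_WRITE', 'TOOL_ACCESS', 'API_ACCESS',
            'DATA_CLASSIFICATION', 'PROJECT_SCOPE', 'COMMUNICATION'
        )),
        resource  TEXT NOT NULL,
        PRIMARY KEY (agent_id, dimension, resource)
    )
""")


def grant(conn, agent_id, dimension, resource):
    """Add one explicit allow. An unknown dimension violates the CHECK."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO acl_grants (agent_id, dimension, resource) VALUES (?, ?, ?)",
            (agent_id, dimension, resource),
        )


def revoke(conn, agent_id, dimension, resource):
    """Remove one allow; the absence of a row is the deny."""
    with conn:
        conn.execute(
            "DELETE FROM acl_grants WHERE agent_id = ? AND dimension = ? AND resource = ?",
            (agent_id, dimension, resource),
        )


def is_allowed(conn, agent_id, dimension, resource):
    row = conn.execute(
        "SELECT 1 FROM acl_grants WHERE agent_id = ? AND dimension = ? AND resource = ?",
        (agent_id, dimension, resource),
    ).fetchone()
    return row is not None


grant(conn, "marketing-1", "API_ACCESS", "/api/content")
print(is_allowed(conn, "marketing-1", "API_ACCESS", "/api/content"))  # True
print(is_allowed(conn, "marketing-1", "API_ACCESS", "/api/finance"))  # False

revoke(conn, "marketing-1", "API_ACCESS", "/api/content")
print(is_allowed(conn, "marketing-1", "API_ACCESS", "/api/content"))  # False
```

Storing only allow rows keeps default-deny structural: there is no DENY value to forget to set, and no row that could mean "everything".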

Further reading & standards

The 7-dimension matrix maps cleanly onto the published frameworks we operate against. If you're building against the same constraints, these are worth reading in full:

- OWASP Top 10 for LLM Applications, LLM08: Excessive Agency. The ACL matrix is our answer to LLM08. Every dimension removes a class of over-privileged action. (owasp.org/www-project-top-10-for-large-language-model-applications)

- NIST AI Risk Management Framework, GOVERN function (GV-1, GV-4). The matrix is a concrete, auditable implementation of the "risk management processes" NIST asks you to document. (nist.gov/itl/ai-risk-management-framework)

- EU AI Act, Article 15 (accuracy, robustness and cybersecurity). High-risk AI systems are required to implement "technical solutions addressing AI specific vulnerabilities." A per-agent, default-deny ACL matrix is one of them. (artificialintelligenceact.eu)

Read the rest of the series

- Day 1: Running 25 AI agents in production

- Day 2: Governance, not guardrails

- Day 3: Persistent agent memory

- Day 4: The Data Sanitization Proxy

- Day 5: The agent provisioning pipeline

- Day 6: Three-layer LLM routing

- Day 7: Catching AI hallucinations

- Bonus: Agent ACL framework (you are here)

- Bonus: Agent wallets & DAO governance

- Bonus: BlackOffice video pipeline

- Bonus: Control Debt Scoring

If you're building multi-agent systems, an ACL framework is not optional. It's the difference between "we're running agents" and "we're running agents safely." Default-deny. Whitelist-only. Audit everything. Seven dimensions, not one.

This is a builder's journal. We're sharing the architecture because the community needs to see what production multi-agent security actually looks like, not what the demos promise.